Auto-Learning Machines Before the Age of Computers

Self-learning machines built before the age of computers are a fascinating, often overlooked chapter in technological history.

Long before Silicon Valley and neural networks, pioneering minds grappled with the concept of creating intelligent, adaptive mechanisms.

These forgotten ideas show that the impulse to create artificial intelligence is far older than the digital computer.

These analog ancestors were not simply calculators; they were designed with feedback loops and adaptive components, anticipating modern machine learning principles.

Studying these early attempts helps us appreciate the philosophical and engineering challenges inherent in simulating thought.

What Were the Foundational Concepts Driving Early Machine Learning?

The concept of a machine capable of learning or adapting predates the electronic computer by centuries, rooted in philosophical debates about automatons and the nature of intelligence.

These early concepts focused heavily on mechanical or electrical feedback mechanisms to modify behavior.

Pioneers sought to mimic the simplest forms of biological conditioning, proving that a machine could adjust its future output based on the success or failure of its past actions.

This marked a profound shift from purely deterministic mechanisms to adaptive systems.

Who Were the Key Thinkers Behind Adaptive Mechanisms?

A key figure in this pre-digital era was Claude Shannon, the “father of information theory.”

Although his career overlapped with the first electronic computers, his work around 1950 explored machine learning using simple relay circuits and even a mechanical mouse.

Shannon’s work demonstrated that complex learning behavior could arise from remarkably simple rules and hardware.

His maze-solving mouse, Theseus, showed genuine trial-and-error learning without relying on pre-programmed maps, a feat of early cybernetics.
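The trial-and-error idea can be sketched in a few lines of Python. This is a loose simplification, not a reconstruction of Shannon's relay circuit: the maze layout, the function names, and the rule "rotate to the next direction after the one used last time" are all assumptions made for illustration. The only memory is one stored exit direction per cell, which stands in for the relays.

```python
# Hypothetical maze: S = start, G = goal, # = wall, . = open floor.
MAZE = ["S..#",
        "#.##",
        "#..G"]
DIRS = [(-1, 0), (0, 1), (1, 0), (0, -1)]  # north, east, south, west


def parse(maze):
    """Return (set of open cells, start cell, goal cell)."""
    cells, marks = set(), {}
    for r, row in enumerate(maze):
        for c, ch in enumerate(row):
            if ch != "#":
                cells.add((r, c))
            if ch in "SG":
                marks[ch] = (r, c)
    return cells, marks["S"], marks["G"]


def step(cell, d):
    """Cell reached by moving one square in direction index d."""
    return (cell[0] + DIRS[d][0], cell[1] + DIRS[d][1])


def explore(cells, start, goal):
    """Wander by trial and error, remembering the last exit from each cell."""
    memory, pos, moves = {}, start, 0
    while pos != goal:
        d = memory.get(pos, -1)
        for _ in range(4):           # rotate past the direction used last time
            d = (d + 1) % 4
            if step(pos, d) in cells:
                break                # found an opening
        else:
            raise ValueError("cell has no open neighbour")
        memory[pos] = d              # overwrite the stored exit for this cell
        pos = step(pos, d)
        moves += 1
    return memory, moves


def replay(memory, start, goal):
    """Second run: follow the stored exits without any searching."""
    path = [start]
    while path[-1] != goal:
        path.append(step(path[-1], memory[path[-1]]))
    return path


cells, start, goal = parse(MAZE)
memory, first_run_moves = explore(cells, start, goal)
second_run = replay(memory, start, goal)
print(first_run_moves, len(second_run) - 1)
```

On this small maze the first run backtracks several times, while the second run walks straight to the goal in five moves. The stored exits always lead to the goal because each remembered exit was used later in time than the one before it on the path.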

How Did Early Ideas of Feedback Influence Self-Correction?

The field of Cybernetics, formally established in the 1940s by Norbert Wiener, provided the theoretical framework for these machines.

Cybernetics studies control and communication in both animals and machines, and it formalized the concept of the feedback loop.

This concept meant a machine’s output could be measured against a goal.

Any deviation would generate an “error signal” that fed back into the system, causing a self-correction. This essential loop is the core mechanism of all adaptive technology, including today’s AI.
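As a minimal sketch of that loop, assuming a simple proportional correction (the function name, the `gain` parameter, and the numbers are illustrative, not taken from any historical device):

```python
def run_feedback_loop(goal, output=0.0, gain=0.5, steps=20):
    """Repeatedly measure the error and feed a fraction of it back."""
    history = []
    for _ in range(steps):
        error = goal - output    # the "error signal": deviation from the goal
        output += gain * error   # self-correction proportional to the error
        history.append(output)
    return history


trace = run_feedback_loop(goal=10.0)
print(trace[0], trace[-1])
```

With a gain between 0 and 1 the remaining error shrinks by a constant factor on every pass, so the output settles on the goal without anyone telling the system what the right output is in advance.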

Which Analog Devices Demonstrated True Pre-Computer Learning?

While the term “learning” is used differently today, several pre-computer devices exhibited genuine adaptive behavior, showing that a machine could learn without digital computation.

These devices used physical or electrical states to store information derived from experience.

These forgotten inventions served as critical proof-of-concept models.

They showed that intelligence was not solely reliant on high-speed digital processing but could be simulated through clever engineering of materials and mechanisms.

What Was the Significance of the Electro-Mechanical Game Players?

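A compelling example is the relay-based game player, such as the electro-mechanical Tic-Tac-Toe machines described from the 1940s onward. (The Nimatron, exhibited in 1940, is often mentioned alongside them, although it played Nim rather than Tic-Tac-Toe.)

The learning variants of these devices used switches and relays to store the outcomes of past games. Such a machine started poorly but improved as its losing moves were eliminated.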

A simple Tic-Tac-Toe machine used relays to represent the game board states.

If the machine lost a game, the set of relays representing the losing move would be mechanically or electrically disabled, forcing the machine to try a different move next time that state occurred. This is a clear, physical instance of reinforcement learning.
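The disable-the-losing-move rule is easy to sketch in Python. To keep the whole run checkable by hand, this example swaps Tic-Tac-Toe for a much smaller take-away game (seven sticks, take one or two, whoever takes the last stick wins). The game, the opponent, and the rule of passing the blame back when a state has no enabled moves left are all assumptions for illustration; the set `disabled` plays the role of the switched-off relays.

```python
MOVES = (2, 1)  # order in which the machine tries its enabled "relays"


def legal(pile):
    """Moves that do not take more sticks than remain."""
    return [m for m in MOVES if m <= pile]


def opponent_move(pile):
    """Fixed opponent: leave a multiple of 3 when possible, else take 1."""
    return pile % 3 or 1


def punish(disabled, history):
    """Disable the last move; if its state has no moves left, blame earlier ones."""
    for state, move in reversed(history):
        disabled.add((state, move))
        if any((state, m) not in disabled for m in legal(state)):
            break  # this state still has an untried alternative


def play(disabled, pile=7):
    """Play one game; the machine moves first and learns only from losses."""
    history = []
    while True:
        enabled = [m for m in legal(pile) if (pile, m) not in disabled]
        move = (enabled or legal(pile))[0]  # fall back if every relay is off
        history.append((pile, move))
        pile -= move
        if pile == 0:
            return "win"
        pile -= opponent_move(pile)
        if pile == 0:
            punish(disabled, history)
            return "loss"


disabled = set()
results = [play(disabled) for _ in range(5)]
print(results, sorted(disabled))
```

The machine loses twice, which switches off both moves from a pile of three and then the opening move that led there, and it wins every game after that. Nothing in the code evaluates positions; the improvement comes entirely from remembering what failed.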

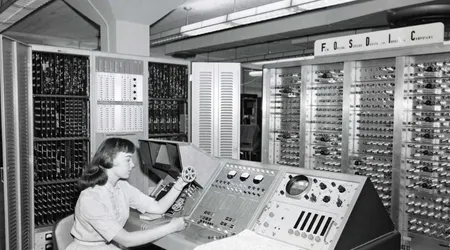

How Did Adaptive Control Systems Anticipate Modern AI?

Adaptive control systems, widely used in wartime and early industrial automation, represent another class of early learning machines. These systems adjusted the parameters of a mechanism based on environmental changes.

A classic case is the adaptive autopilot system in early aviation. It learned to compensate for factors like wind shear and varying air density by adjusting control surfaces.

The system adapted its control response based on past measurement errors, improving its performance in real-time.

Why Were These Ideas Overshadowed by the Digital Revolution?

Despite their conceptual brilliance, these early learning machines were quickly relegated to historical footnotes once digital computing arrived.

The limitations of physical hardware and the superior scalability of software made the transition inevitable.

Analog and mechanical complexity simply could not compete with the speed, memory, and flexibility of digital computation.

The theoretical possibilities of intelligence exploded once it was unchained from physical switches and relays.

What Was the Critical Limitation of Analog Learning?

The primary limitation of analog and mechanical learning systems was their inability to scale.

Every additional piece of “knowledge” or complexity required more physical components: more wires, more relays, or more dedicated mechanical parts. This rapidly made them unwieldy and impractical.

Furthermore, these systems were inherently slow. Information traveled at the speed of electricity through a wire or the speed of a mechanical part moving.

Digital systems, manipulating abstract bits, offered a speed and memory capacity that rendered analog systems obsolete overnight.

Why is the Perceptron a Key Bridge Between Eras?

The Perceptron, developed by Frank Rosenblatt in 1957, serves as a crucial conceptual bridge.

Although the algorithm was first simulated on a digital computer, it was also built as a dedicated electro-mechanical machine, the Mark I Perceptron, which implemented a neural network using physical potentiometers.

The Perceptron’s physical implementation used motor-driven potentiometers to store weighted values. This machine could learn to classify patterns (e.g., distinguishing between a square and a triangle).

When it made a mistake, the error signal physically drove the motor to adjust the potentiometer settings, effectively updating the ‘weight’ and correcting the machine’s knowledge.
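The same update can be written as a short Python sketch of the classic perceptron learning rule. The floating-point `weights` stand in for potentiometer settings, and the four two-input training patterns (a logical AND) are a hypothetical stand-in for the shapes the real machine was shown.

```python
def predict(weights, bias, x):
    """Fire (1) if the weighted sum of the inputs is positive, else 0."""
    total = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1 if total > 0 else 0


def train(samples, epochs=20, lr=1.0):
    """Perceptron rule: nudge each weight only when the output is wrong."""
    weights, bias = [0.0] * len(samples[0][0]), 0.0
    for _ in range(epochs):
        mistakes = 0
        for x, target in samples:
            error = target - predict(weights, bias, x)  # -1, 0, or +1
            if error:
                mistakes += 1
                # The error signal "turns the potentiometers".
                weights = [w + lr * error * xi for w, xi in zip(weights, x)]
                bias += lr * error
        if mistakes == 0:
            break  # every pattern is classified correctly
    return weights, bias


# Hypothetical training set: output 1 only when both inputs are on.
SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train(SAMPLES)
print(weights, bias)
```

Because these patterns are linearly separable, the rule stops making mistakes after a handful of passes. A correct answer leaves the weights untouched, which mirrors the hardware: the motors only ran when there was an error to correct.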

What are the Modern Echoes of These Forgotten Inventions?

The foundational ideas developed by early pioneers (feedback loops, adaptive control, and trial-and-error conditioning) are not merely historical relics; they are the conceptual pillars of modern machine learning.

These pre-computer learning machines laid the theoretical groundwork.

Today’s complex algorithms owe a profound debt to these simpler, mechanical precursors. The concept of reinforcement learning, for instance, is the direct descendant of Shannon’s maze-solving mouse.

How Does Reinforcement Learning Mirror Early Cybernetics?

Reinforcement Learning (RL), the technique powering modern AIs that master games like Chess or Go, is a complex, digitized version of early cybernetic principles.

The machine learns by interacting with its environment, receiving rewards for correct actions and penalties for incorrect ones.

This process is fundamentally the same as disabling a losing relay in the Tic-Tac-Toe machine: the system optimizes its behavior based on the numerical feedback signal.
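A minimal sketch of that numerical feedback, using tabular Q-learning as one standard modern technique. The five-cell corridor, the reward of 1 at the right-hand end, and the parameter values are assumptions chosen to keep the example small; a penalty for bad moves could be added in the same place as the reward.

```python
import random

N_STATES, GOAL = 5, 4   # hypothetical corridor: cells 0..4, goal at cell 4
ACTIONS = (-1, +1)      # step left, step right


def train(episodes=200, alpha=0.5, gamma=0.9, seed=0):
    """Learn action values from reward alone while acting at random."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        s = 0
        while s != GOAL:
            a = rng.choice(ACTIONS)              # pure trial and error
            s2 = min(max(s + a, 0), GOAL)        # walls at both ends
            reward = 1.0 if s2 == GOAL else 0.0  # the feedback signal
            best_next = 0.0 if s2 == GOAL else max(q[(s2, b)] for b in ACTIONS)
            # Move the stored value a fraction of the way toward the target.
            q[(s, a)] += alpha * (reward + gamma * best_next - q[(s, a)])
            s = s2
    return q


def greedy_policy(q):
    """Best known action in every non-goal cell."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]


q_table = train()
policy = greedy_policy(q_table)
print(policy)
```

Where the relay machine could only switch a move off, the table here holds a graded value for every move, so the system can rank good moves as well as reject bad ones. After training, the greedy policy steps right in every cell.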

The complexity has increased exponentially, but the core logic of adaptive feedback remains identical.

Why Must We Revisit the Analog Era for Future AI Design?

Revisiting the analog era offers valuable, counter-intuitive insights.

While digital systems are supreme for speed, research into neuromorphic computing, which aims to mimic the physical structure of the brain using analog circuits, shows we may still learn from non-binary, energy-efficient processing.

The history of learning machines is like comparing a sailing ship and a jet plane.

The jet plane is faster (digital). But the sailing ship (analog) utilized simpler, more energy-efficient physical principles (wind/feedback) to achieve its goal. Future AI may need the speed of the jet but the energy efficiency of the sail.

| Early Adaptive Mechanism | Era | Principle of Learning | Modern Counterpart |
| --- | --- | --- | --- |
| Shannon’s Theseus Mouse | 1950 | Trial-and-Error (Physical Disincentives) | Reinforcement Learning (RL) |
| Adaptive Autopilot | 1940s | Error Correction via Feedback Loop | Adaptive Control Systems, PID Controllers |
| Perceptron (Mark I) | 1957 | Weight Adjustment via Physical Potentiometers | Modern Neural Networks (Weight Optimization) |
| Electro-Mechanical Game Players | 1940s | State Prohibition (Eliminating Losing Moves) | Q-Learning and Policy Optimization |

Conclusion: The Unsung Pioneers of Intelligence

The story of Auto-Learning Machines Before the Age of Computers is a testament to human ingenuity that transcends technological constraints.

These forgotten engineers and theorists laid the intellectual and cybernetic groundwork that defines the AI era of 2025. Their work proves that the concept of intelligence is fundamentally about adaptability, not just computation speed.

We must remember these pioneers whose analog, mechanical ideas paved the path for today’s digital revolution.

Their legacy reminds us that breakthroughs often require a willingness to experiment with the simplest possible components to solve the most complex problems.

What forgotten analog principle do you think holds the key to the next AI breakthrough? Share your thoughts in the comments below.

Frequently Asked Questions

What is the key difference between an automaton and a learning machine?

An automaton (like an ancient clockwork doll) performs a fixed sequence of pre-programmed actions.

A learning machine can modify its sequence or internal state based on feedback from its environment, enabling it to improve its performance over time without human reprogramming.

Was Alan Turing involved in the concept of pre-computer learning machines?

While Alan Turing is famous for the theoretical Turing Machine, he also heavily explored the concept of machine intelligence, proposing the Turing Test in 1950.

His theoretical and philosophical work was crucial in defining what machine intelligence should look like, inspiring many of the physical experiments that followed.

Did any of these analog learning machines ever become commercially successful?

The early concepts themselves were mostly proof-of-concept models used in research.

However, the derivative principles, specifically adaptive control systems, became highly successful in commercial and military applications, such as factory automation, sophisticated missile guidance, and stable flight controls in aircraft.

Why is the concept of a feedback loop so important?

The feedback loop is essential because it closes the gap between action and consequence. It provides the mechanism for self-correction.

Without feedback, a machine is incapable of adjusting its behavior, meaning it cannot learn from its mistakes or adapt to a changing environment.

What happened to Shannon’s famous maze-solving mouse, Theseus?

Shannon’s original mouse, Theseus, which was a mechanical marvel built from relays and solenoids, has been preserved.

It now resides in the MIT Museum, serving as a powerful, tangible reminder of the origins of machine learning and the field of cybernetics.